File system fragmentation

In computing, file system fragmentation, sometimes called file system aging, is the tendency of a file system to lay out the contents of files non-contiguously to allow in-place modification of their contents. It is a special case of data fragmentation. File system fragmentation increases seek time on spinning storage media, which reduces throughput. Fragmentation can be remedied by reorganizing files and free space back into contiguous areas, a process called defragmentation.

Solid-state drives do not physically seek, so their non-sequential data access is hundreds of times faster than that of drives with moving parts, making fragmentation less of an issue. Manually defragmenting solid-state storage is not recommended, because it can prematurely wear drives via unnecessary write–erase operations.[1]

Causes

When a file system is first initialized on a partition, it contains only a few small internal structures and is otherwise one contiguous block of empty space.[a] This means that the file system is able to place newly created files anywhere on the partition. For some time after creation, files can be laid out near-optimally. When the operating system and applications are installed or archives are unpacked, separate files end up laid out sequentially, so related files are positioned close to each other.

As existing files are deleted or truncated, new regions of free space are created. When existing files are appended to, it is often impossible to resume the write exactly where the file used to end, as another file may already be allocated there; thus, a new fragment has to be allocated. As time goes on, and the same factors are continuously present, free space as well as frequently appended files tend to fragment more. Shorter regions of free space also mean that the file system is no longer able to allocate new files contiguously, and has to break them into fragments. This is especially true when the file system becomes full and large contiguous regions of free space are unavailable.

Example


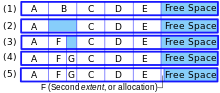

The following example is a simplification of an otherwise complicated subject. Consider the following scenario: A new disk has had five files, named A, B, C, D and E, saved contiguously and sequentially in that order. Each file uses 10 blocks of space. (Here, the block size is unimportant.) The remainder of the disk is a single contiguous region of free space. Thus, additional files can be created and saved after the file E.

If the file B is deleted, a second region of ten blocks of free space is created, and the disk becomes fragmented. The empty space is simply left there, marked as available for later use, and then used again as needed.[b] The file system could defragment the disk immediately after a deletion, but doing so would incur a severe performance penalty at unpredictable times.

Now, a new file called F, which requires seven blocks of space, can be placed into the first seven blocks of the newly freed space formerly holding the file B, and the three blocks following it will remain available. If another new file called G, which needs only three blocks, is added, it could then occupy the space after F and before C.

If subsequently F needs to be expanded, since the space immediately following it is occupied, there are three options for the file system:

- Adding a new block somewhere else and indicating that F has a second extent

- Moving files in the way of the expansion elsewhere, to allow F to remain contiguous

- Moving file F so it can be one contiguous file of the new, larger size

The second option is probably impractical for performance reasons, as is the third when the file is very large. The third option is impossible when there is no single contiguous free space large enough to hold the new file. Thus the usual practice is simply to create an extent somewhere else and chain the new extent onto the old one.

Material added to the end of file F would be part of the same extent. But if there is so much material that no room is available after the last extent, then another extent would have to be created, and so on. Eventually the file system has free segments in many places and some files may be spread over many extents. Access time for those files (or for all files) may become excessively long.
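The scenario above can be replayed with a toy allocator. This is only a sketch: the `Disk` class, the 60-block disk size, and the "take the lowest-numbered free blocks" policy are assumptions made for illustration, not the behavior of any particular file system.

```python
from typing import List, Optional, Tuple

FREE = None  # marker for an unallocated block


class Disk:
    """Toy disk: one list slot per block, holding a file name or FREE."""

    def __init__(self, size: int) -> None:
        self.blocks: List[Optional[str]] = [FREE] * size

    def allocate(self, name: str, count: int) -> None:
        """Give `name` the `count` lowest-numbered free blocks (assumed policy)."""
        free = [i for i, owner in enumerate(self.blocks) if owner is FREE]
        if len(free) < count:
            raise OSError("disk full")
        for i in free[:count]:
            self.blocks[i] = name

    def delete(self, name: str) -> None:
        """Mark every block of `name` as free; the data is not moved."""
        self.blocks = [FREE if owner == name else owner for owner in self.blocks]

    def extents(self, name: str) -> List[Tuple[int, int]]:
        """Return the (start, length) runs of blocks belonging to `name`."""
        runs = []
        start = None
        for i, owner in enumerate(self.blocks + [FREE]):  # sentinel closes last run
            if owner == name and start is None:
                start = i
            elif owner != name and start is not None:
                runs.append((start, i - start))
                start = None
        return runs


disk = Disk(60)                 # assumed size: 50 used blocks plus 10 free
for name in "ABCDE":
    disk.allocate(name, 10)     # A..E saved contiguously, 10 blocks each
disk.delete("B")                # leaves a 10-block hole after A
disk.allocate("F", 7)           # F fills the first 7 blocks of the hole
disk.allocate("G", 3)           # G fills the remaining 3 blocks
disk.allocate("F", 5)           # F grows: no room after it, so a second extent

print(disk.extents("F"))        # [(10, 7), (50, 5)]
print(disk.extents("G"))        # [(17, 3)]
```

File F ends up in two extents, which corresponds to the first of the three options listed above.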

Necessity


Some early file systems were unable to fragment files. One such example was the Acorn DFS file system used on the BBC Micro. Due to its inability to fragment files, the error message "Can't extend" would at times appear, and the user would often be unable to save a file even if the disk had adequate space for it.

DFS used a very simple disk structure in which files on disk were located only by their length and starting sector. This meant that every file had to exist as one contiguous block of sectors, and fragmentation was not possible. Using the example in the table above, the attempt to expand file F in step five would have failed on such a system with the "Can't extend" error message. Regardless of how much free space might remain on the disk in total, it was not available to extend the data file.

Standards of error handling at the time were primitive, and in any case programs squeezed into the limited memory of the BBC Micro could rarely afford to waste space attempting to handle errors gracefully. Instead, the user would be dumped back at the command prompt with the "Can't extend" message, and all the data which had yet to be appended to the file would be lost. The problem could not be solved by simply checking the free space on the disk beforehand, either. While free space on the disk might exist, the size of the largest contiguous block of free space was not immediately apparent without analyzing the numbers presented by the disk catalog, and so would escape the user's notice. In addition, almost all DFS users had previously used cassette file storage, which does not suffer from this error. Upgrading to a floppy disk system was expensive, and it was a shock that the upgrade might cause data loss without warning.[2][3]
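The gap between total free space and usable free space can be made concrete with a small model. The sketch below assumes a catalog of hypothetical `(name, start_sector, length)` entries and a 60-sector disk; it ignores the sectors a real DFS disk reserves for the catalog itself and is not a description of the actual on-disk format.

```python
from typing import List, Tuple

Entry = Tuple[str, int, int]  # (name, start_sector, length_in_sectors)


def free_gaps(catalog: List[Entry], disk_sectors: int) -> List[Tuple[int, int]]:
    """Return (start, length) for every free gap around the contiguous files."""
    gaps = []
    cursor = 0
    for _name, start, length in sorted(catalog, key=lambda e: e[1]):
        if start > cursor:
            gaps.append((cursor, start - cursor))
        cursor = start + length
    if cursor < disk_sectors:
        gaps.append((cursor, disk_sectors - cursor))
    return gaps


def can_extend(catalog: List[Entry], name: str, extra: int, disk_sectors: int) -> bool:
    """A contiguous-only file can grow only into the gap directly after it."""
    _, start, length = next(e for e in catalog if e[0] == name)
    end = start + length
    return any(
        gap_start == end and gap_len >= extra
        for gap_start, gap_len in free_gaps(catalog, disk_sectors)
    )


# Hypothetical catalog: files A, F, C and E, with holes between them.
catalog = [("A", 0, 10), ("F", 10, 7), ("C", 20, 10), ("E", 40, 10)]
gaps = free_gaps(catalog, 60)

total_free = sum(length for _, length in gaps)
largest_gap = max(length for _, length in gaps)
print(gaps)                                # [(17, 3), (30, 10), (50, 10)]
print(total_free, largest_gap)             # 23 10
print(can_extend(catalog, "F", 5, 60))     # False: only 3 sectors follow F
```

With 23 sectors free in total, a new 15-sector file still cannot be saved, because no single gap is larger than 10 sectors.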

Types

File system fragmentation may occur on several levels:

- Fragmentation within individual files

- Free space fragmentation

- The decrease of locality of reference between separate, but related files

- Fragmentation within the data structures or special files reserved for the file system itself

File fragmentation

Individual file fragmentation occurs when a single file has been broken into multiple pieces (called extents on extent-based file systems). While disk file systems attempt to keep individual files contiguous, this is often not possible without significant performance penalties. File system check and defragmentation tools typically only account for file fragmentation in their "fragmentation percentage" statistic.
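As a rough illustration of such a statistic, the sketch below counts extents from each file's list of block numbers and reports the share of files that have more than one extent. The definition used here is an assumption; actual tools compute their percentages in different ways.

```python
from typing import Dict, List


def count_extents(blocks: List[int]) -> int:
    """Number of contiguous runs in a file's block list (in logical order)."""
    if not blocks:
        return 0
    breaks = sum(1 for prev, cur in zip(blocks, blocks[1:]) if cur != prev + 1)
    return 1 + breaks


def fragmentation_percentage(files: Dict[str, List[int]]) -> float:
    """Assumed definition: percentage of non-empty files with over one extent."""
    non_empty = [blocks for blocks in files.values() if blocks]
    if not non_empty:
        return 0.0
    fragmented = sum(1 for blocks in non_empty if count_extents(blocks) > 1)
    return 100.0 * fragmented / len(non_empty)


# Hypothetical block maps: only F is split into two pieces.
files = {
    "A": list(range(0, 10)),
    "F": list(range(10, 17)) + list(range(50, 55)),
    "G": [17, 18, 19],
    "C": list(range(20, 30)),
}
print(count_extents(files["F"]))        # 2
print(fragmentation_percentage(files))  # 25.0
```

Note that this figure says nothing about free space fragmentation or about how far apart related files are.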

Free space fragmentation

Free (unallocated) space fragmentation occurs when there are several unused areas of the file system where new files or metadata can be written to. Unwanted free space fragmentation is generally caused by deletion or truncation of files, but file systems may also intentionally insert fragments ("bubbles") of free space in order to facilitate extending nearby files (see preventing fragmentation below).

File scattering

File scattering, also called file segmentation, related-file fragmentation, or application-level (file) fragmentation, refers to the lack of locality of reference (within the storing medium) between related files. Unlike the previous two types of fragmentation, file scattering is a much vaguer concept, as it depends heavily on the access pattern of specific applications. This also makes objectively measuring or estimating it very difficult. However, arguably, it is the most critical type of fragmentation, as studies have found that the most frequently accessed files tend to be small compared to available disk throughput per second.[4]

To avoid related file fragmentation and improve locality of reference (in this case called file contiguity), assumptions or active observations about the operation of applications have to be made. A very common assumption is that it is worthwhile to keep smaller files within a single directory together, and lay them out in the natural file system order. While this is often a reasonable assumption, it does not always hold. For example, an application might read several different files, perhaps in different directories, in exactly the same order they were written. Thus, a file system that simply orders all writes successively might work faster for the given application.
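The trade-off can be illustrated with a deliberately crude model that measures how far the head has to move between the starting blocks of consecutively read files. The file names, block positions, and the `head_travel` metric are all assumptions made for this sketch; the model ignores file lengths, caching, and rotational effects.

```python
from typing import Dict, List


def head_travel(layout: Dict[str, int], access_order: List[str]) -> int:
    """Sum of block distances between the start blocks of consecutive reads."""
    positions = [layout[name] for name in access_order]
    return sum(abs(b - a) for a, b in zip(positions, positions[1:]))


# Hypothetical application: wrote four files in this order, reads them the same way.
write_order = ["docs/a", "img/a", "docs/b", "img/b"]

# Layout 1: files grouped by directory, in directory order.
by_directory = {"docs/a": 0, "docs/b": 10, "img/a": 20, "img/b": 30}

# Layout 2: files placed in the order they were written.
by_write_order = {"docs/a": 0, "img/a": 10, "docs/b": 20, "img/b": 30}

print(head_travel(by_directory, write_order))    # 50
print(head_travel(by_write_order, write_order))  # 30
```

For this access pattern the write-ordered layout needs less movement; an application that scans one directory at a time would favor the other layout.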

Data structure fragmentation

The catalogs or indices used by a file system itself can also become fragmented over time, as the entries they contain are created, changed, or deleted. This is more of a concern when the volume contains a multitude of very small files than when a volume is filled with fewer larger files. Depending on the particular file system design, the files or regions containing that data may also become fragmented (as described above for 'regular' files), regardless of any fragmentation of the actual data records maintained within those files or regions.[5]

For some file systems (such as NTFS[c] and HFS/HFS Plus[6]), the collation/sorting/compaction needed to optimize this data cannot easily occur while the file system is in use.[7]

Negative consequences

File system fragmentation is more problematic on consumer-grade hard disk drives, on which file systems are usually placed, because of the increasing disparity between sequential access speed and rotational latency (and, to a lesser extent, seek time).[8] Thus, fragmentation is an important problem in file system research and design. The containment of fragmentation depends not only on the on-disk format of the file system, but also heavily on its implementation.[9] File system fragmentation has less performance impact on solid-state drives, as there is no mechanical seek time involved.[10] However, the file system needs to store additional metadata for each non-contiguous part of a file. Each piece of metadata occupies space and takes processor time to handle. If the maximum fragmentation limit is reached, write requests fail.[10]
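A back-of-the-envelope model shows why the disparity matters on a hard disk: each fragment costs one head repositioning, while the transfer itself runs at sequential speed. The default figures below (9 ms seek, 4.17 ms average rotational latency as for a 7200 rpm drive, 150 MiB/s sequential throughput) are illustrative assumptions, not measurements of any specific drive.

```python
def read_time_ms(
    size_mib: float,
    fragments: int,
    seek_ms: float = 9.0,                  # assumed average seek time
    rotational_latency_ms: float = 4.17,   # half a rotation at 7200 rpm
    throughput_mib_s: float = 150.0,       # assumed sequential throughput
) -> float:
    """Estimated time to read a file split into `fragments` pieces."""
    positioning = fragments * (seek_ms + rotational_latency_ms)
    transfer = size_mib / throughput_mib_s * 1000.0
    return positioning + transfer


contiguous = read_time_ms(100, fragments=1)
scattered = read_time_ms(100, fragments=1000)
print(f"contiguous: {contiguous:.0f} ms, 1000 fragments: {scattered:.0f} ms")
```

Under these assumptions the transfer time stays the same, and the positioning overhead grows linearly with the number of fragments until it dominates.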

In simple file system benchmarks, the fragmentation factor is often omitted, as realistic aging and fragmentation are difficult to model. Rather, for simplicity of comparison, file system benchmarks are often run on empty file systems. Thus, the results may vary heavily from real-life access patterns.[11]

Mitigation

Several techniques have been developed to fight fragmentation. They can usually be classified into two categories: preemptive and retroactive. Due to the difficulty of predicting access patterns, these techniques are most often heuristic in nature and may degrade performance under unexpected workloads.

Preventing fragmentation

Preemptive techniques attempt to keep fragmentation to a minimum at the time data is being written on the disk. The simplest is appending data to an existing fragment in place where possible, instead of allocating new blocks to a new fragment.

Many of today's file systems attempt to pre-allocate longer chunks, or chunks from different free space fragments, called extents, to files that are actively appended to. This largely avoids file fragmentation when several files are concurrently being appended to, thus avoiding their becoming excessively intertwined.[9]

If the final size of a file subject to modification is known, storage for the entire file may be preallocated. For example, the Microsoft Windows swap file (page file) can be resized dynamically under normal operation, and therefore can become highly fragmented. This can be prevented by specifying a page file with the same minimum and maximum sizes, effectively preallocating the entire file.

BitTorrent and other peer-to-peer filesharing applications limit fragmentation by preallocating the full space needed for a file when initiating downloads.[12]
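An application that knows the final size of a file can request the space up front. The sketch below uses Python's `os.posix_fallocate`, which is available on many Unix systems; the `preallocate` helper, the file name, and the fallback to `os.ftruncate` are assumptions for illustration. The fallback only sets the file length and may produce a sparse file, so it does not guarantee contiguous or even reserved storage.

```python
import os
import tempfile


def preallocate(path: str, size: int) -> None:
    """Ask the file system to reserve `size` bytes for `path` before writing."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o644)
    try:
        try:
            # Reserves blocks for the byte range [0, size) where supported.
            os.posix_fallocate(fd, 0, size)
        except (AttributeError, OSError):
            # Assumed fallback: sets the length only; the file may be sparse.
            os.ftruncate(fd, size)
    finally:
        os.close(fd)


with tempfile.TemporaryDirectory() as tmp:
    target = os.path.join(tmp, "download.part")  # hypothetical file name
    preallocate(target, 1024 * 1024)
    reported_size = os.path.getsize(target)

print(reported_size)  # 1048576
```

Whether the reserved space ends up contiguous is still decided by the file system's allocator; preallocation only gives it the chance to choose one large region at once.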

A relatively recent technique is delayed allocation in XFS, HFS+[13] and ZFS; the same technique is also called allocate-on-flush in reiser4 and ext4. When the file system is being written to, file system blocks are reserved, but the locations of specific files are not laid down yet. Later, when the file system is forced to flush changes as a result of memory pressure or a transaction commit, the allocator will have much better knowledge of the files' characteristics. Most file systems with this approach try to flush files in a single directory contiguously. Assuming that multiple reads from a single directory are common, locality of reference is improved.[14] Reiser4 also orders the layout of files according to the directory hash table, so that when files are being accessed in the natural file system order (as dictated by readdir), they are always read sequentially.[15]
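The effect of deferring placement can be seen in a toy comparison. In the sketch below, two files are appended to in alternating one-block writes; `allocate_immediately` places each write in the next free block, while `allocate_on_flush` only counts pending blocks and assigns locations when flushed. Both functions and the file names are hypothetical simplifications and do not model any real allocator.

```python
from typing import Dict, List, Tuple

Write = Tuple[str, int]  # (file_name, number_of_blocks)


def allocate_immediately(writes: List[Write]) -> List[str]:
    """Place every write in the next free blocks as soon as it arrives."""
    layout: List[str] = []
    for name, blocks in writes:
        layout.extend([name] * blocks)
    return layout


def allocate_on_flush(writes: List[Write]) -> List[str]:
    """Only count pending blocks per file; choose locations at flush time."""
    pending: Dict[str, int] = {}  # dicts keep insertion order
    for name, blocks in writes:
        pending[name] = pending.get(name, 0) + blocks
    layout: List[str] = []
    for name, total in pending.items():  # the "flush": one run per file
        layout.extend([name] * total)
    return layout


def extent_count(layout: List[str], name: str) -> int:
    """Number of separate runs of `name` in the block layout."""
    runs = 0
    previous = None
    for owner in layout:
        if owner == name and previous != name:
            runs += 1
        previous = owner
    return runs


writes = [("X", 1), ("Y", 1)] * 3  # two files appended to alternately

print(extent_count(allocate_immediately(writes), "X"))  # 3
print(extent_count(allocate_on_flush(writes), "X"))     # 1
```

Because the allocator sees each file's total pending size at flush time, it can give every file a single run instead of interleaving them.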

Defragmentation

Retroactive techniques attempt to reduce fragmentation, or the negative effects of fragmentation, after it has occurred. Many file systems provide defragmentation tools, which attempt to reorder fragments of files, and sometimes also decrease their scattering (i.e. improve their contiguity, or locality of reference) by keeping either smaller files in directories, or directory trees, or even file sequences close to each other on the disk.

The HFS Plus file system transparently defragments files that are less than 20 MiB in size and are broken into 8 or more fragments, when the file is being opened.[16]
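The two conditions stated above can be written as a simple predicate. This is only a restatement of those two thresholds: the function name is hypothetical, and the actual HFS Plus implementation may apply further checks that are not described here.

```python
MIB = 1024 * 1024


def qualifies_for_on_open_defrag(size_bytes: int, fragment_count: int) -> bool:
    """True if a file is under 20 MiB and split into 8 or more fragments."""
    return size_bytes < 20 * MIB and fragment_count >= 8


print(qualifies_for_on_open_defrag(5 * MIB, 12))   # True
print(qualifies_for_on_open_defrag(5 * MIB, 7))    # False: too few fragments
print(qualifies_for_on_open_defrag(20 * MIB, 12))  # False: not under 20 MiB
```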

The now-obsolete Commodore Amiga Smart File System (SFS) defragmented itself while the file system was in use. The defragmentation process was almost completely stateless (apart from the location it was working on), so that it could be stopped and started instantly. During defragmentation, data integrity was ensured for both metadata and normal data.

Notes

- [a] Some file systems, such as NTFS and ext2+, might preallocate empty contiguous regions for special purposes.

- [b] The practice of leaving the space occupied by deleted files largely undisturbed is why undelete programs were able to work; they simply recovered the file whose name had been deleted from the directory, but whose contents were still on disk.

- [c] NTFS reserves 12.5% of the volume for the 'MFT zone', but only until that space is needed by other files (i.e., if the volume ever becomes more than 87.5% full, an unfragmented MFT can no longer be guaranteed).[5]

References

- [1] Fisher, Ryan (2022-02-11). "Should I defrag my SSD?". PC Gamer. Archived from the original on 2022-02-18. Retrieved 2022-04-26.

- [2] http://www.8bs.com/hints/083.txt - Description of the can't extend error

- [3] http://8bs.com/mag/1to4/basegd1.txt - Possible data loss caused by the can't extend error

- [4] Douceur, John R.; Bolosky, William J. (June 1999). "A Large-Scale Study of File-System Contents". ACM SIGMETRICS Performance Evaluation Review. 27 (1): 59–70. doi:10.1145/301464.301480.

- [5] "How NTFS reserves space for its Master File Table (MFT)". learn.microsoft.com. Microsoft. Retrieved 22 October 2022.

- [6] "DiskWarrior in Depth". Alsoft. Retrieved 22 October 2022.

- [7] "Maintaining Windows 2000 Peak Performance Through Defragmentation". learn.microsoft.com. Microsoft. Retrieved 22 October 2022.

- [8] Kryder, Mark H. (2006-04-03). Future Storage Technologies: A Look Beyond the Horizon (PDF). Storage Networking World conference. Seagate Technology. Archived from the original (PDF) on 17 July 2006.

- [9] McVoy, L. W.; Kleiman, S. R. (Winter 1991). "Extent-like Performance from a UNIX File System" (PostScript). Proceedings of USENIX winter '91. Dallas, Texas: Sun Microsystems, Inc. pp. 33–43. Retrieved 2006-12-14.

- [10] Hanselman, Scott (3 December 2014). "The real and complete story - Does Windows defragment your SSD?". Scott Hanselman's blog.

- [11] Smith, Keith Arnold (January 2001). "Workload-Specific File System Benchmarks" (PDF). Cambridge, Massachusetts: Harvard University. Archived from the original (PDF) on 2004-11-17. Retrieved 2006-12-14.

- [12] Layton, Jeffrey (29 March 2009). "From ext3 to ext4: An Interview with Theodore Ts'o". Linux Magazine. QuinStreet. Archived from the original on April 1, 2009.

- [13] Singh, Amit (May 2004). "Fragmentation in HFS Plus Volumes". Mac OS X Internals. Archived from the original on 2012-11-18. Retrieved 2009-10-27.

- [14] Sweeney, Adam; Doucette, Doug; Hu, Wei; Anderson, Curtis; Nishimoto, Mike; Peck, Geoff (January 1996). "Scalability in the XFS File System" (PDF). Proceedings of the USENIX 1996 Annual Technical Conference. San Diego, California: Silicon Graphics. Retrieved 2006-12-14.

- [15] Reiser, Hans (2006-02-06). "The Reiser4 Filesystem". Google TechTalks. Archived from the original on 19 May 2011. Retrieved 2006-12-14.

- [16] Singh, Amit (2007). "12 The HFS Plus File System". Mac OS X Internals: A Systems Approach. Addison Wesley. ISBN 0321278542.

Further reading

- Smith, Keith; Seltzer, Margo. File Layout and File System Performance (PDF) (Paper). Harvard University.